Colloquia and Seminars

Explore the world of mathematical discovery through our colloquia and seminars, where you can engage with experts, share ideas, and celebrate the beauty of mathematics.

Colloquia

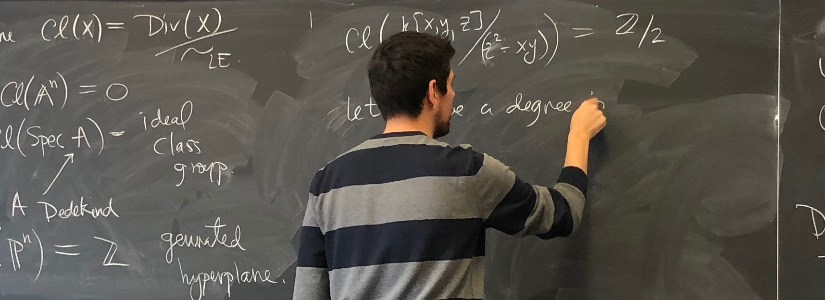

A mathematics colloquium is a dynamic gathering where experts in various mathematical disciplines present their latest research and findings. These events offer a unique opportunity to delve into cutting-edge mathematical concepts, explore innovative problem-solving approaches, and gain insights into the forefront of mathematical knowledge. Expect engaging presentations, lively discussions, and a chance to connect with fellow math enthusiasts, all in an environment that encourages intellectual curiosity and fosters collaboration.

Seminars

A mathematics seminar is an intimate and interactive forum for in-depth exploration of specific mathematical topics. In these sessions, participants, including experts and enthusiasts alike, engage in detailed discussions, problem-solving, and the exchange of ideas related to a particular area of mathematics. Seminars often provide a platform for presenting and analyzing ongoing research projects, allowing attendees to gain profound insights and contribute to the advancement of mathematical knowledge.

We have seminars in many disciplines. Select the discipline you are interested in to view its seminar schedule.